Choosing the Right Model Isn’t the Hard Part - Making It Work in Manufacturing Is

Every month, a new model tops some benchmark. Accuracy improves. Latency drops. And yet AI projects in manufacturing still struggle. The reason is not that the models aren’t good enough. It is that the environments they’re deployed into are far more unforgiving than a benchmark dataset, so benchmark gains rarely translate directly into industrial AI model performance on the factory floor.

The real challenge isn’t picking the right model. It’s making it work reliably in a factory environment, where the stakes are higher, infrastructure is older, and the margin for error is thinner.

The Model Selection Conversation Is Often the Wrong Starting Point

When a manufacturer starts an AI project, the first instinct is often to ask: “Which model should we use?” It’s a reasonable question, but in most cases, it’s premature.

Before model selection even matters, you need to understand what the model will actually run on. Will it be deployed on-premises because of data security requirements? Is the edge device a constrained system with limited memory? Is connectivity intermittent, or absent entirely? These real-world constraints narrow your options more decisively than any accuracy comparison ever will.

A vision model with 96% accuracy in controlled tests might be unusable if it requires hardware the factory floor cannot support. A language model that performs well in the cloud becomes irrelevant if the data it needs cannot leave the site.
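To make this concrete, here is a minimal sketch of screening candidate models against site constraints before anyone compares accuracy. The `CandidateModel` and `SiteConstraints` classes, their fields, and all of the numbers are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass


@dataclass
class CandidateModel:
    # Hypothetical description of a candidate model and its deployment needs.
    name: str
    accuracy: float      # from offline tests, 0..1
    memory_mb: int       # RAM needed at inference time
    needs_cloud: bool    # True if inference runs on an external service


@dataclass
class SiteConstraints:
    # Hypothetical limits of the target deployment site.
    device_memory_mb: int
    data_must_stay_on_site: bool


def feasible_models(candidates, site):
    """Keep only models the site can actually run; accuracy is ignored here."""
    feasible = []
    for m in candidates:
        if m.memory_mb > site.device_memory_mb:
            continue  # does not fit on the edge device
        if m.needs_cloud and site.data_must_stay_on_site:
            continue  # data would have to leave the site
        feasible.append(m)
    return feasible


candidates = [
    CandidateModel("large-vision", accuracy=0.96, memory_mb=8000, needs_cloud=False),
    CandidateModel("cloud-api", accuracy=0.95, memory_mb=0, needs_cloud=True),
    CandidateModel("compact-vision", accuracy=0.93, memory_mb=1500, needs_cloud=False),
]
site = SiteConstraints(device_memory_mb=2048, data_must_stay_on_site=True)

print([m.name for m in feasible_models(candidates, site)])  # ['compact-vision']
```

Only after this filter does it make sense to rank the survivors by accuracy. In this example the most accurate candidate never makes it to that comparison.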

Model selection in manufacturing AI has to start with infrastructure reality, not model capability.

Accuracy vs. Speed: A Trade-off That Looks Different on the Line

In most AI discussions, accuracy is treated as the headline metric. In manufacturing, the more important question is often how fast the model needs to be and what happens when it is wrong.

Consider a defect detection system on a high-speed assembly line. If the line runs at 200 units per minute, a new unit arrives every 300 milliseconds (60,000 ms ÷ 200). That window has to cover inspecting the unit, logging the result, and triggering any action, so the model itself gets only part of it. A model that is slightly more accurate but takes 500 milliseconds is not better. It is unusable.
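The arithmetic can be written as a quick feasibility check. The 50 ms allowance for logging and actuation below is an assumption for illustration. In practice it is usually safer to plug in a high-percentile (worst-case) latency than an average.

```python
def per_unit_budget_ms(units_per_minute: float) -> float:
    """Time between consecutive units on the line, in milliseconds."""
    return 60_000 / units_per_minute


def model_fits(units_per_minute: float, model_latency_ms: float, overhead_ms: float) -> bool:
    """True if inference plus logging/actuation overhead fits in one unit's window."""
    return model_latency_ms + overhead_ms <= per_unit_budget_ms(units_per_minute)


print(per_unit_budget_ms(200))   # 300.0
print(model_fits(200, 500, 50))  # False: 550 ms exceeds the 300 ms window
print(model_fits(200, 180, 50))  # True: 230 ms fits
```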

On the other hand, a predictive maintenance model forecasting failures 48 hours in advance has more tolerance for latency. A few extra seconds of processing time is irrelevant when the output drives scheduling rather than real-time decisions.

Industrial AI model performance has to be evaluated in the context of the decision it supports, not in isolation. Accuracy and speed are both variables, and the right balance shifts depending on whether you are dealing with real-time inspection, process optimization, or longer-term anomaly detection.
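One way to encode "evaluate in the context of the decision" is to give each use case its own latency budget and pick the most accurate model that fits it. The model names, accuracies, latencies, and budgets below are hypothetical.

```python
# Hypothetical candidate models: (name, accuracy, worst-case latency in ms)
MODELS = [
    ("heavy", 0.97, 500.0),
    ("light", 0.94, 120.0),
]

# Hypothetical latency budgets per decision type, in milliseconds
BUDGETS_MS = {
    "real_time_inspection": 300.0 - 50.0,  # per-unit window minus assumed overhead
    "maintenance_scheduling": 60_000.0,    # output feeds a schedule, not the line
}


def pick_model(decision):
    """Most accurate model whose latency fits this decision's budget, or None."""
    budget = BUDGETS_MS[decision]
    fitting = [m for m in MODELS if m[2] <= budget]
    if not fitting:
        return None
    return max(fitting, key=lambda m: m[1])[0]


print(pick_model("real_time_inspection"))    # light
print(pick_model("maintenance_scheduling"))  # heavy
```

The same two models produce opposite choices because the decision, not the model, sets the constraint.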

Complexity vs. Maintainability: The Problem That Shows Up Later

There is a tendency to reach for the most sophisticated model available. More parameters and layers often suggest more capability. But in manufacturing environments, complexity carries a cost that shows up after deployment.

A complex model is harder to debug. When it starts producing unexpected outputs, tracing the cause is difficult. Retraining requires more data, more compute, and more time. Updating it when processes change becomes a heavier effort.

A simpler model with slightly lower accuracy but faster retraining, easier debugging, and better adaptability is often more valuable in practice. AI model trade-offs in manufacturing are not just technical. They are operational. The right model is one your team can actually manage over time.

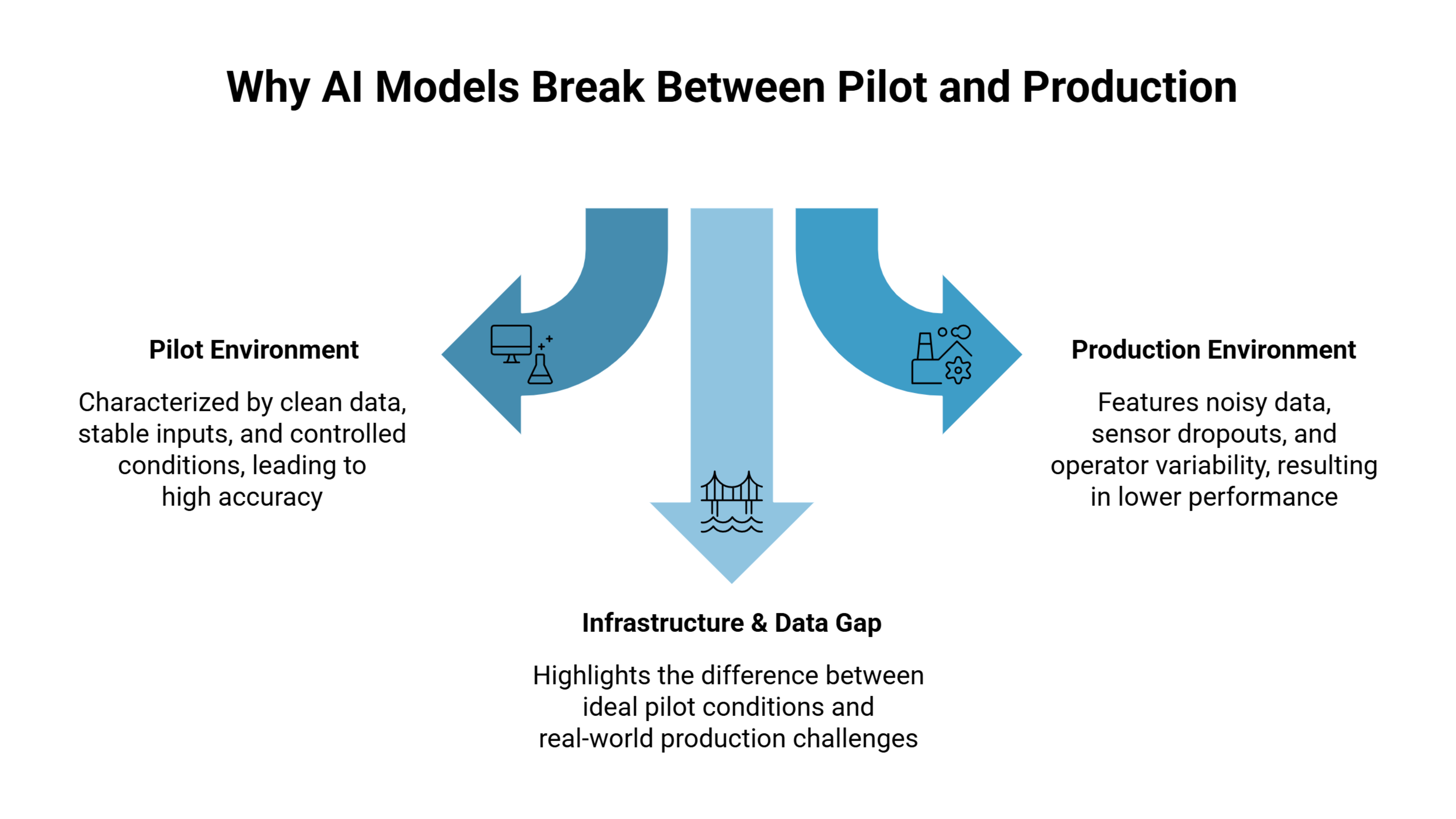

Deploying AI Models in Factories: The Infrastructure Gap

Factory infrastructure was not designed for AI. OT networks are often air-gapped or segmented. PLCs and SCADA systems use protocols that modern AI tools do not natively support. Sensor data often requires preprocessing before it becomes usable.
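As an example of that preprocessing, many PLCs expose readings over Modbus as 16-bit registers. A 32-bit float is commonly split across two consecutive registers, and the word order varies by device. The sketch below uses only Python's `struct` module. The register values, the word-order flag, and the scaling range are assumptions for illustration and are not taken from any vendor's register map.

```python
import struct


def registers_to_float(high: int, low: int, swap_words: bool = False) -> float:
    """Combine two 16-bit register values into an IEEE 754 float32.

    Word order differs between devices, so it is a parameter here.
    """
    if swap_words:
        high, low = low, high
    raw = struct.pack(">HH", high, low)  # big-endian bytes of the two words
    return struct.unpack(">f", raw)[0]


def scale_raw(raw: int, raw_min: int, raw_max: int, eng_min: float, eng_max: float) -> float:
    """Linearly map an integer raw count to engineering units (e.g. degrees C)."""
    return eng_min + (raw - raw_min) * (eng_max - eng_min) / (raw_max - raw_min)


# 0x41C8 0x0000 is the float32 bit pattern for 25.0
print(registers_to_float(0x41C8, 0x0000))                   # 25.0
print(registers_to_float(0x0000, 0x41C8, swap_words=True))  # 25.0
print(scale_raw(2000, 0, 4000, 0.0, 100.0))                 # 50.0
```

Getting the word order wrong does not raise an error. It silently produces a plausible-looking but meaningless number, which is one reason this integration work is easy to underestimate.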

This creates a gap between where the model runs and where the data exists. Bridging this gap requires integration work that is easy to underestimate.

A common scenario is a model that performs well in a pilot using clean, structured data. In production, it starts receiving inputs from sensors that drop out, produce outliers, or generate inconsistent timestamps. Performance degrades, not because of the model itself, but because of the data pipeline.
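Here is a minimal sketch of a cleaning step that addresses those three symptoms: dropouts, outliers, and out-of-order or duplicate timestamps. It assumes readings arrive as `(timestamp_seconds, value_or_None)` tuples. Outliers are rejected with a median-absolute-deviation (MAD) rule, which is one common robust choice among several. The function name, the expected sampling interval, and the threshold of 5 are assumptions for illustration, and the rule is meant to be applied per batch or window rather than to an entire history.

```python
from statistics import median


def clean_readings(readings, expected_interval_s=1.0, mad_threshold=5.0):
    """Sort, de-duplicate, drop dropouts and outliers, and report timestamp gaps.

    readings: list of (timestamp_seconds, value_or_None) tuples.
    Returns (clean, gaps), where gaps lists (start, end) pairs of consecutive
    kept readings that are more than 1.5x the expected interval apart.
    """
    # 1. Order by timestamp and keep the first reading for each timestamp.
    seen = set()
    ordered = []
    for ts, value in sorted(readings, key=lambda r: r[0]):
        if ts in seen:
            continue
        seen.add(ts)
        ordered.append((ts, value))

    # 2. Drop dropouts (missing values).
    present = [(ts, v) for ts, v in ordered if v is not None]
    if not present:
        return [], []

    # 3. Reject outliers by distance from the median, measured in MADs.
    values = [v for _, v in present]
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        clean = present  # most values identical: skip rejection rather than divide by zero
    else:
        clean = [(ts, v) for ts, v in present if abs(v - med) / mad <= mad_threshold]

    # 4. Report gaps in the usable data.
    gaps = [
        (a[0], b[0])
        for a, b in zip(clean, clean[1:])
        if b[0] - a[0] > 1.5 * expected_interval_s
    ]
    return clean, gaps


raw = [(0.0, 20.1), (1.0, 20.3), (1.0, 20.3), (3.0, None), (2.0, 20.2), (4.0, 250.0), (5.0, 20.4)]
clean, gaps = clean_readings(raw)
print([ts for ts, _ in clean])  # [0.0, 1.0, 2.0, 5.0]
print(gaps)                     # [(2.0, 5.0)]
```

Reporting gaps instead of silently interpolating across them lets downstream logic decide whether the model should run on that window at all.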

Deploying AI models in factories means accounting for the data environment and its limitations. Data quality, pipeline reliability, and system integration often determine success more than model choice.

Real-World Constraints That Rarely Show Up in Pilots

Pilots are optimistic by design. Production is not.

In real environments, shifts change, and each crew interacts with the system a little differently. New product variations appear that the model has not seen. Machines get replaced, causing small but meaningful shifts in sensor data.

These are real-world constraints that only surface after deployment. They are not model problems in isolation, but they are experienced as model failures.

Teams that handle this well treat deployment as the starting point, not the finish line. They build monitoring early, define drift conditions in advance, and create feedback loops so production issues feed back into model updates.
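"Define drift conditions in advance" can be as simple as fixing a metric and a threshold per input before go-live. The sketch below uses the Population Stability Index (PSI), one common drift metric. It is not necessarily what any particular team uses. The bin count, the epsilon, and the 0.25 threshold are assumptions; 0.25 is a commonly quoted rule of thumb for a significant shift, and it should be tuned per sensor.

```python
import math

DRIFT_THRESHOLD = 0.25  # assumed; tune per sensor or feature


def psi(reference, production, bins=10):
    """Population Stability Index between two samples of one numeric feature.

    Bin edges come from the reference sample's range. Values outside that
    range are clamped into the edge bins, and a small epsilon avoids log(0)
    when a bin is empty.
    """
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0

    def proportions(sample):
        counts = [0] * bins
        for x in sample:
            i = int((x - lo) / width)
            i = min(max(i, 0), bins - 1)
            counts[i] += 1
        eps = 1e-6
        return [max(c / len(sample), eps) for c in counts]

    ref_p = proportions(reference)
    prod_p = proportions(production)
    return sum((p - r) * math.log(p / r) for r, p in zip(ref_p, prod_p))


def drifted(reference, production):
    """True if production data has shifted past the pre-agreed threshold."""
    return psi(reference, production) > DRIFT_THRESHOLD


# Hypothetical sensor data: a stable baseline, then the same sensor offset by 0.5
reference = [20.0 + 0.1 * (i % 10) for i in range(1000)]
shifted = [x + 0.5 for x in reference]
print(drifted(reference, reference))  # False
print(drifted(reference, shifted))    # True
```

A check like this, run on a schedule against a frozen reference window, is one way to catch a replaced machine or a new product variant before operators start reporting "model failures."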

What “Making It Work” Actually Requires

This is where most AI model decisions are truly tested.

Getting AI to work in manufacturing requires understanding the production environment as a system. This includes data flows, failure points, human interaction, and operational edge cases. It also requires choosing models not just for performance, but for operability.

That includes investing in data pipelines, integration layers, monitoring systems, and retraining workflows. These are not secondary concerns. They are core to making AI usable in production.

Some teams building tools for industrial environments, such as Seewise, approach the problem from the production environment outward rather than from the model inward. That shift in perspective often matters more than the model itself.

The model is just one component of a larger system. Choosing a good one is expected. Making it work within the realities of manufacturing is the actual challenge, and it is the one that deserves more attention.